
An Algorithm Helps Deaf People Enjoy Movies Through Tactile Sensations

For many people, movies are defined by sound as much as by visuals. Music builds tension, dialogue conveys emotion, and sound effects create immersion. For deaf and hard-of-hearing individuals, however, much of this sensory experience is inaccessible. Subtitles provide context, but they cannot fully capture the richness of audio. A new algorithm seeks to bridge this gap by translating sound into tactile sensations, so that viewers can physically feel a film's soundtrack.

The Limitations of Traditional Accessibility Tools

Subtitles and sign language interpretation have long been the primary methods for making films accessible. While effective for conveying dialogue, they do not reproduce the emotional and atmospheric aspects of sound.

For example, a sudden explosion, a subtle musical shift, or the rhythm of a soundtrack cannot be fully expressed through text alone.

Challenges Faced by Deaf Viewers

- Loss of emotional nuance from audio cues

- Limited representation of sound effects

- Difficulty experiencing rhythm and pacing

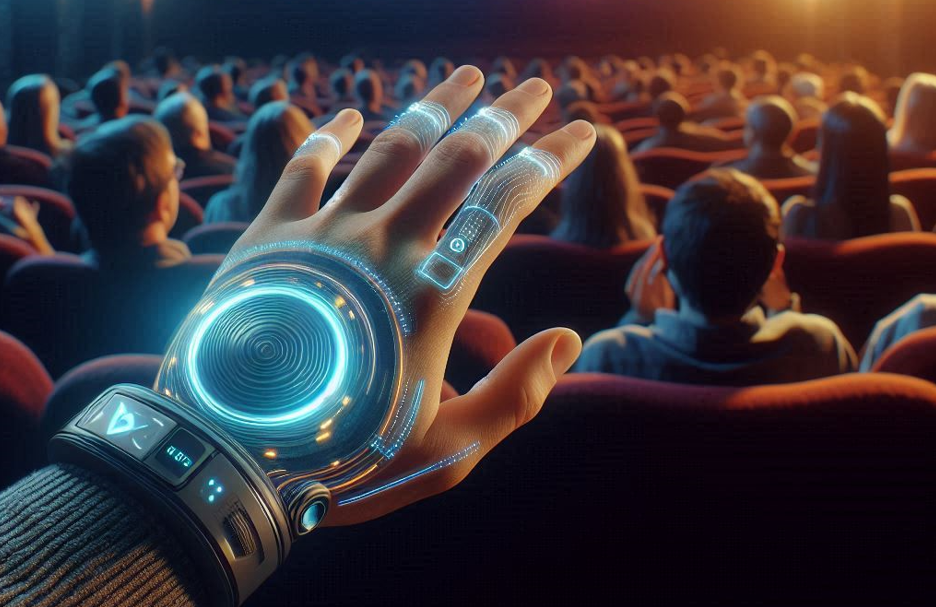

The Concept of Tactile Cinema

The algorithm introduces a new dimension to film accessibility by converting audio signals into physical vibrations. These vibrations are delivered through wearable devices or specially designed seating systems.

This approach allows viewers to perceive sound through touch, creating a multisensory experience that complements visual content.

Key Components

- Audio analysis engine

- Haptic feedback devices

- Real-time signal processing system

How the Algorithm Translates Sound

The system analyzes the audio track of a film and breaks it down into components such as frequency, amplitude, and rhythm. These elements are then mapped to tactile patterns.

Low-frequency sounds may be represented as deep vibrations, while higher frequencies produce lighter, more rapid sensations.
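As a rough illustration of this mapping, the sketch below takes one short frame of audio samples, estimates its loudness and dominant frequency, and picks a vibration setting. It is not the algorithm described in this article: the zero-crossing frequency estimate is a deliberately crude stand-in for real spectral analysis, and the names `to_vibration`, `split_hz`, and `pulse_rate_hz`, along with the 250 Hz split and the 40/160 Hz pulse rates, are assumptions chosen only for the example.

```python
import math

def rms(frame):
    """Root-mean-square amplitude of one frame of samples in [-1, 1]."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))

def dominant_frequency(frame, sample_rate):
    """Crude frequency estimate from the zero-crossing rate (assumption:
    the frame is dominated by a single tone)."""
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a < 0) != (b < 0))
    duration = len(frame) / sample_rate
    return crossings / (2 * duration)  # two crossings per cycle

def to_vibration(frame, sample_rate, split_hz=250.0):
    """Map a frame to a hypothetical vibration setting."""
    level = min(1.0, rms(frame))
    if dominant_frequency(frame, sample_rate) < split_hz:
        # Low-frequency sound: deep, slow, full-strength vibration.
        return {"intensity": level, "pulse_rate_hz": 40.0}
    # Higher-frequency sound: lighter, more rapid sensation.
    return {"intensity": 0.5 * level, "pulse_rate_hz": 160.0}
```

Given two tones of equal loudness, the lower one comes out as the stronger, slower vibration. A production system would more plausibly use a proper frequency transform and several bands rather than a single split point.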

Processing Steps

- Extraction of audio features

- Classification of sound types

- Mapping to tactile patterns

- Delivery through haptic devices
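The four steps above can be sketched as a small pipeline. Everything here is illustrative: the rule-based `classify` function stands in for whatever classifier the real system uses, the labels and the `PATTERNS` table are invented for the example, and `LoggingDevice` is a hypothetical placeholder that records commands instead of driving hardware.

```python
import math
from dataclasses import dataclass

@dataclass
class Features:
    rms: float   # average loudness of the frame
    peak: float  # largest absolute sample in the frame

def extract_features(frame):
    """Step 1: extract simple audio features from one frame."""
    peak = max(abs(s) for s in frame)
    rms = math.sqrt(sum(s * s for s in frame) / len(frame))
    return Features(rms=rms, peak=peak)

def classify(features):
    """Step 2: toy rule-based classification (an assumption, not the real model)."""
    if features.rms < 0.01:
        return "silence"
    # A peak far above the average level suggests a sharp, impulsive sound.
    if features.peak / features.rms > 4.0:
        return "impact"
    return "sustained"

# Step 3: hypothetical intensity sequences for each sound type.
PATTERNS = {
    "silence": [],
    "impact": [1.0, 0.5, 0.2],
    "sustained": [0.4, 0.4, 0.4],
}

def map_to_pattern(label, features):
    """Scale the base pattern by how loud the frame was."""
    scale = min(1.0, features.peak)
    return [round(level * scale, 3) for level in PATTERNS[label]]

class LoggingDevice:
    """Step 4 stand-in: records patterns instead of driving real hardware."""
    def __init__(self):
        self.sent = []

    def play(self, pattern):
        self.sent.append(pattern)

def process_frame(frame, device):
    features = extract_features(frame)
    label = classify(features)
    device.play(map_to_pattern(label, features))
    return label
```

Calling `process_frame` once per audio frame keeps each stage separate, so any one of them could be replaced (for example, swapping the rules for a trained classifier) without touching the others.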

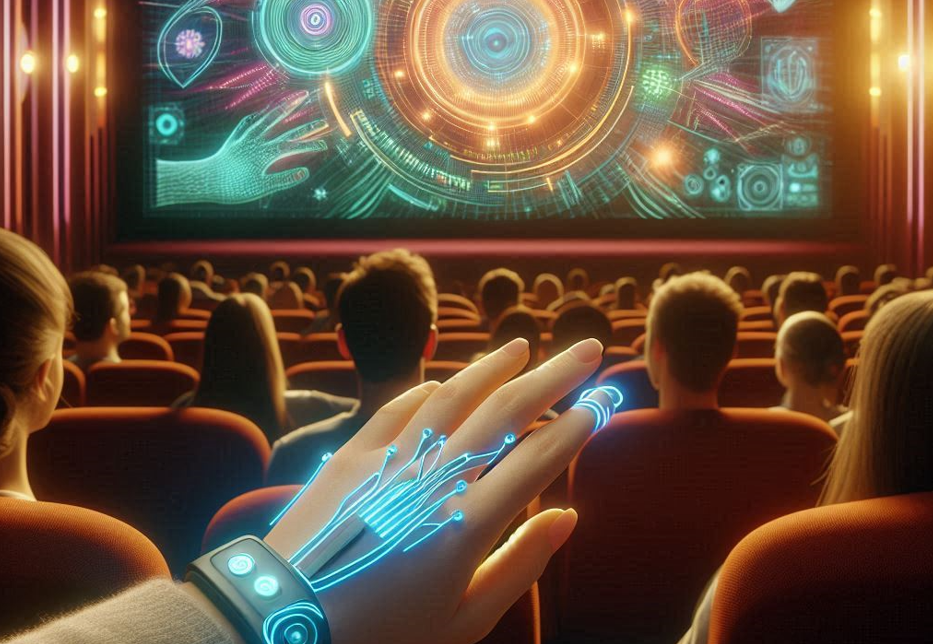

Creating an Immersive Experience

The goal is not simply to replicate sound, but to create an equivalent sensory experience. For instance, a heartbeat in a suspense scene might be translated into rhythmic pulses felt through a wearable device.
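One simple way to approximate the heartbeat example is to look for moments where the loudness envelope rises through a threshold and emit a short pulse at each one. This is a minimal sketch under that assumption, not the article's actual method; `detect_beats`, `to_pulses`, the threshold, and the pulse duration and intensity are all hypothetical values.

```python
def detect_beats(envelope, frame_seconds, threshold=0.5):
    """Return the times (in seconds) where the envelope rises through the threshold."""
    beats = []
    above = False
    for i, level in enumerate(envelope):
        if level >= threshold and not above:
            beats.append(i * frame_seconds)  # rising edge marks a beat
        above = level >= threshold
    return beats

def to_pulses(beat_times, pulse_seconds=0.08, intensity=0.7):
    """Turn beat times into short haptic pulse commands."""
    return [
        {"start": t, "duration": pulse_seconds, "intensity": intensity}
        for t in beat_times
    ]
```

Because only rising edges count, a beat that stays loud for several frames still produces a single pulse, which keeps the tactile rhythm aligned with the audible one.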

Action sequences can be experienced as dynamic waves of vibration, enhancing the sense of movement and intensity.

Examples of Tactile Representation

- Explosions as strong, sudden pulses

- Music as rhythmic vibration patterns

- Dialogue as subtle, localized sensations
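The three examples above could be expressed as intensity envelopes, one value per time step. The shapes below are guesses at what "strong and sudden", "rhythmic", and "subtle and localized" might look like in practice; the function names, the decay rate, the beat spacing, and the `"collar"` actuator label are all hypothetical.

```python
import math

def explosion(steps, decay=0.5):
    """Strong, sudden pulse: full intensity at once, then exponential decay."""
    return [math.exp(-decay * i) for i in range(steps)]

def music(steps, beat_every=4, level=0.6):
    """Rhythmic pattern: a pulse on every beat, rest in between."""
    return [level if i % beat_every == 0 else 0.0 for i in range(steps)]

def dialogue(steps, level=0.15):
    """Subtle sensation: a constant, low-level vibration."""
    return [level] * steps

# Each sound type pairs an envelope shape with the actuators that play it.
# "all" and "collar" are made-up actuator groups; dialogue is kept localized.
RENDERERS = {
    "explosion": (explosion, "all"),
    "music": (music, "all"),
    "dialogue": (dialogue, "collar"),
}

def render(sound_type, steps):
    make_envelope, actuators = RENDERERS[sound_type]
    return {"actuators": actuators, "envelope": make_envelope(steps)}
```

Keeping the shapes in a lookup table makes it easy to add further sound types or to tune one pattern without affecting the others.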

Applications Beyond Entertainment

While primarily designed for movies, the technology has broader applications: the same audio-to-touch mapping could be used in gaming, virtual reality, and even live performances.

Educational content can also benefit, providing new ways to convey information through multisensory experiences.

Challenges and Ethical Considerations

Designing effective tactile representations means finding a workable intensity range: overly intense vibrations may be uncomfortable, while signals that are too subtle may be difficult to perceive.

Key Challenges

- Balancing intensity and comfort

- Ensuring accurate representation of sound

- Adapting to individual preferences
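The first and third challenges suggest a per-viewer calibration step. The sketch below assumes each viewer has a profile with a perception floor and a comfort ceiling, and rescales every raw intensity into that range. `ComfortProfile`, `personalize`, and the default values are hypothetical; in practice they would presumably come from a calibration session with the viewer.

```python
from dataclasses import dataclass

@dataclass
class ComfortProfile:
    floor: float = 0.1    # weakest level this viewer reliably perceives
    ceiling: float = 0.8  # strongest level this viewer finds comfortable

def personalize(intensity, profile):
    """Rescale a raw intensity in [0, 1] into the viewer's comfortable range."""
    if intensity <= 0.0:
        return 0.0  # keep silence silent
    clamped = min(1.0, intensity)
    return profile.floor + clamped * (profile.ceiling - profile.floor)
```

With the defaults, a raw intensity of 0.5 maps to roughly 0.45, and anything at or above 1.0 is capped at the ceiling, so no signal is either too faint to notice or stronger than the viewer has accepted.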

The Future of Inclusive Media

This technology represents a significant step toward more inclusive media experiences. By expanding the ways in which content can be perceived, it opens new possibilities for accessibility.

As tactile interfaces become more advanced, the boundary between sound and touch may continue to blur, creating richer and more inclusive forms of storytelling.